Ask ChatGPT to write a sonnet about photosynthesis. Ask Midjourney to paint a Victorian-era city from the perspective of a bird. Ask GitHub Copilot to write a function that sorts a list of customers by spending. They'll all produce something — often something impressively good — in seconds. What they've just done wasn't retrieval. It was creation. That's what makes generative AI genuinely different.

Generative AI is arguably one of the most significant technological developments since the smartphone, and its core principles are surprisingly understandable. This guide explains what generative AI is, how the major categories work, what they can and cannot do, and why they matter so much.

What Makes AI 'Generative'?

Traditional AI systems are primarily discriminative — they classify, sort, and find patterns in existing data. A spam filter decides whether an email is spam. An image classifier decides whether a photo contains a dog. A fraud detection system decides whether a transaction is suspicious. These are enormously useful capabilities, but they work with what already exists.

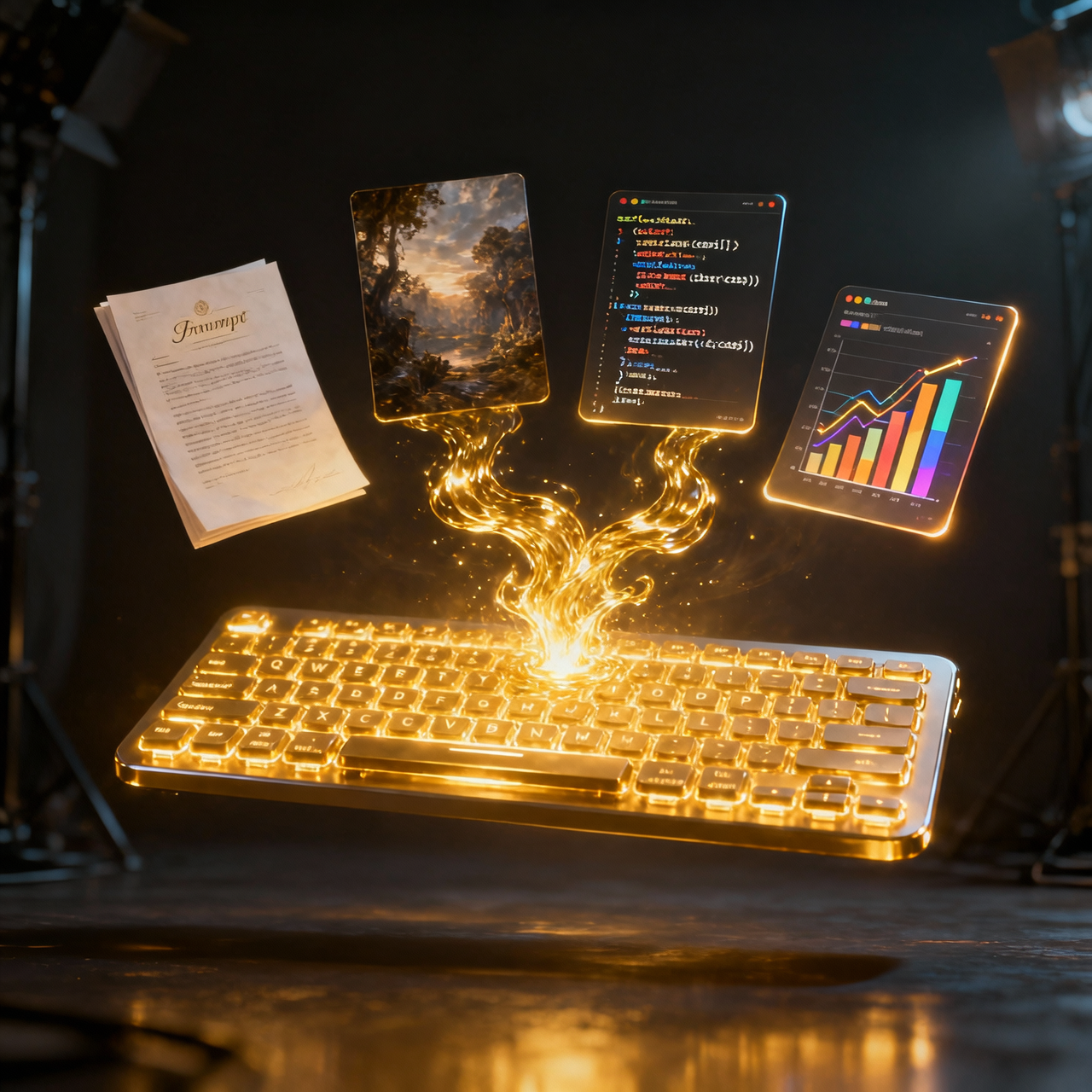

Generative AI creates new content. It doesn't retrieve from a database or rearrange existing pieces — it synthesizes genuinely new outputs that didn't exist before. A generative text model produces new sentences. A generative image model produces new images. A generative music model produces new audio. This creative capacity represents a fundamental expansion of what AI can do.

How Large Language Models Work

ChatGPT, Claude, Gemini, and other AI chatbots are built on large language models (LLMs). Understanding how they work requires understanding one key concept: these models don't store facts the way a database does. They learn statistical patterns about language from vast amounts of text.

The Transformer Architecture

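Modern LLMs are built on a neural network architecture called the transformer, introduced in the landmark 2017 paper 'Attention Is All You Need' by researchers at Google. The key innovation was building the whole model around the attention mechanism: a way for the model to learn which parts of the context are most relevant when predicting the next word. Because attention looks at every position in a sequence at once instead of stepping through the text one word at a time, transformers can be trained efficiently in parallel. That made much larger models and datasets practical and dramatically improved coherence over long passages.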
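To make the attention idea concrete, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. The vectors are invented for illustration, and a real transformer would learn the projections that produce queries, keys, and values and would run many attention heads in parallel, so treat this as a toy under those assumptions rather than a production implementation.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # Relevance of each context token: dot product of query and key.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical 2-dimensional vectors for three context tokens.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, 0.0]

weights, output = attention(query, keys, values)
```

The first and third tokens line up with the query and receive equal, larger weights, while the second token, whose key is unrelated to the query, receives less. That weighting is what "most relevant parts of the context" means in practice.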

The Training Process

An LLM like GPT-4 is trained by processing an enormous body of text drawn from the internet, books, and other sources. For recent models whose training data has been publicly described, that means trillions of tokens (words and word fragments). For each position in the text, the model predicts which token comes next. When it's wrong, the error is used to adjust the model's billions of parameters, the numerical weights that determine its behavior. After training on enough text, the model has encoded extraordinarily rich patterns about language, knowledge, reasoning, and even style.
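The predict-then-adjust loop can be sketched at toy scale. The example below assumes a made-up twelve-word corpus and a model that looks only at the current word, which is far simpler than a real LLM, but the training signal is the same: measure how wrong the next-token prediction was and nudge the weights to reduce that error.

```python
import math

text = "the cat sat on the mat the cat sat on the mat".split()
vocab = sorted(set(text))
index = {word: i for i, word in enumerate(vocab)}
size = len(vocab)

# One adjustable weight (logit) per (current token, next token) pair.
weights = [[0.0] * size for _ in range(size)]

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def average_loss():
    """Mean cross-entropy of the true next token over the text."""
    losses = []
    for current, nxt in zip(text, text[1:]):
        probs = softmax(weights[index[current]])
        losses.append(-math.log(probs[index[nxt]]))
    return sum(losses) / len(losses)

def train(epochs=200, learning_rate=0.5):
    for _ in range(epochs):
        for current, nxt in zip(text, text[1:]):
            row = weights[index[current]]
            probs = softmax(row)
            for j in range(size):
                # Gradient of cross-entropy w.r.t. each logit: prob - target.
                target = 1.0 if j == index[nxt] else 0.0
                row[j] -= learning_rate * (probs[j] - target)

loss_before = average_loss()
train()
loss_after = average_loss()
```

Before training every next word is equally likely, so the loss starts at the logarithm of the vocabulary size. After training the loss is lower, and the model has learned, for example, that "sat" tends to follow "cat".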

From Training to Conversation

When you type a prompt, the trained model uses its learned parameters to generate a response one token at a time, with each token conditioned on everything that came before. The model doesn't 'think' about its answer the way humans deliberate. Instead, it samples from a distribution of probable next tokens, shaped by the prompt and the patterns learned during training.
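A single generation step can be sketched as follows. The scores in `logits` are hypothetical stand-ins for what a trained model would compute from the prompt, and the `temperature` parameter is a common sampling control rather than something specific to any one product. A real system repeats this step, appending each sampled token to the context before predicting the next.

```python
import math
import random

def sample_next_token(logits, temperature=1.0, rng=random):
    """Sample one token from raw scores; lower temperature means less randomness."""
    tokens = list(logits)
    scaled = [logits[t] / temperature for t in tokens]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    # random.choices normalizes the weights into probabilities for us.
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for the token that follows "The cat sat on the".
logits = {"mat": 3.0, "sofa": 2.0, "roof": 1.0, "moon": -1.0}

rng = random.Random(0)  # fixed seed so the sketch is repeatable
prompt = ["The", "cat", "sat", "on", "the"]
response = prompt + [sample_next_token(logits, temperature=0.8, rng=rng)]
```

Because the next token is sampled rather than looked up, the same prompt can yield different responses on different runs. Pushing the temperature toward zero makes the model pick the highest-scoring token almost every time.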

AI Image Generation: How Text Becomes Image

Image generators like DALL-E, Midjourney, and Stable Diffusion work on different but related principles. Most modern image generators use a technique called diffusion: they learn to gradually remove noise from an image, conditioned on a text description. During training, real images are gradually destroyed by adding noise. The model learns to reverse this process. Given a text description, it starts from pure noise and gradually reconstructs an image that matches the description.
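The noise-adding half of this process is simple enough to sketch directly. The example below assumes one common formulation, in which an image is blended with Gaussian noise according to a `keep` fraction, and uses an invented four-pixel "image". The hard part, a neural network trained to undo the noise under guidance from a text description, is deliberately left out.

```python
import math
import random

def add_noise(pixels, keep, rng):
    """Blend an image with Gaussian noise.

    keep=1.0 returns the original image; keep=0.0 returns pure noise.
    """
    signal_scale = math.sqrt(keep)
    noise_scale = math.sqrt(1.0 - keep)
    return [signal_scale * p + noise_scale * rng.gauss(0.0, 1.0) for p in pixels]

rng = random.Random(42)  # fixed seed so the sketch is repeatable
image = [0.9, 0.8, -0.7, -0.9]  # a made-up 4-pixel "image"

# Progressively noisier versions of the same image, from clean to pure noise.
schedule = [1.0, 0.75, 0.5, 0.25, 0.0]
noisy_versions = [add_noise(image, keep, rng) for keep in schedule]
```

During training, the model is shown noisy versions like these and learns to estimate the noise that was added. At generation time it runs the other way, starting from pure noise and removing a little at each step.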

The Major Categories of Generative AI

Text generation (LLMs) produces writing, code, analysis, and conversation. Image generation (diffusion models) produces visual art, photorealistic images, and illustrations from text descriptions. Audio and music generation produces synthetic speech (including cloned voices), sound effects, and original music from textual instructions. Video generation, the newest frontier, produces short video clips from text or image prompts, with OpenAI's Sora among the most publicized examples. Code generation, a specialized subset of text generation, produces functional software code from natural language requirements.

What Generative AI Cannot Do

Despite remarkable capabilities, generative AI has meaningful limitations. It cannot reliably verify its own outputs for factual accuracy, which leads to hallucinations: confident statements that are simply wrong. It cannot access real-time information without external tools. It has no genuine understanding, consciousness, or intentionality; it generates plausible responses based on learned patterns. It cannot produce genuinely original creative work in the sense of ideas that transcend its training data. And it performs poorly on truly novel problems that require reasoning beyond what's been demonstrated in that data.

Why Generative AI Changes Everything

Generative AI changes the economics of content creation fundamentally. What previously required weeks of human creative work can now be drafted in minutes. This has implications for every industry that produces content: media, marketing, software, design, legal documents, research reports, and education materials. The constraint shifts from 'who can create this' to 'who can direct, evaluate, and take responsibility for the creation.' This is a profound shift in the nature of skilled knowledge work.

Conclusion

Generative AI is not magic, but it is genuinely revolutionary. Understanding that it works through pattern synthesis rather than knowledge retrieval, and that it has real limitations around truth and novelty, helps calibrate both appropriate enthusiasm and appropriate skepticism. These systems are already transforming work, creativity, and communication, and we're at the earliest stage of that transformation.